Guidance for safer AI-enabled medical devices

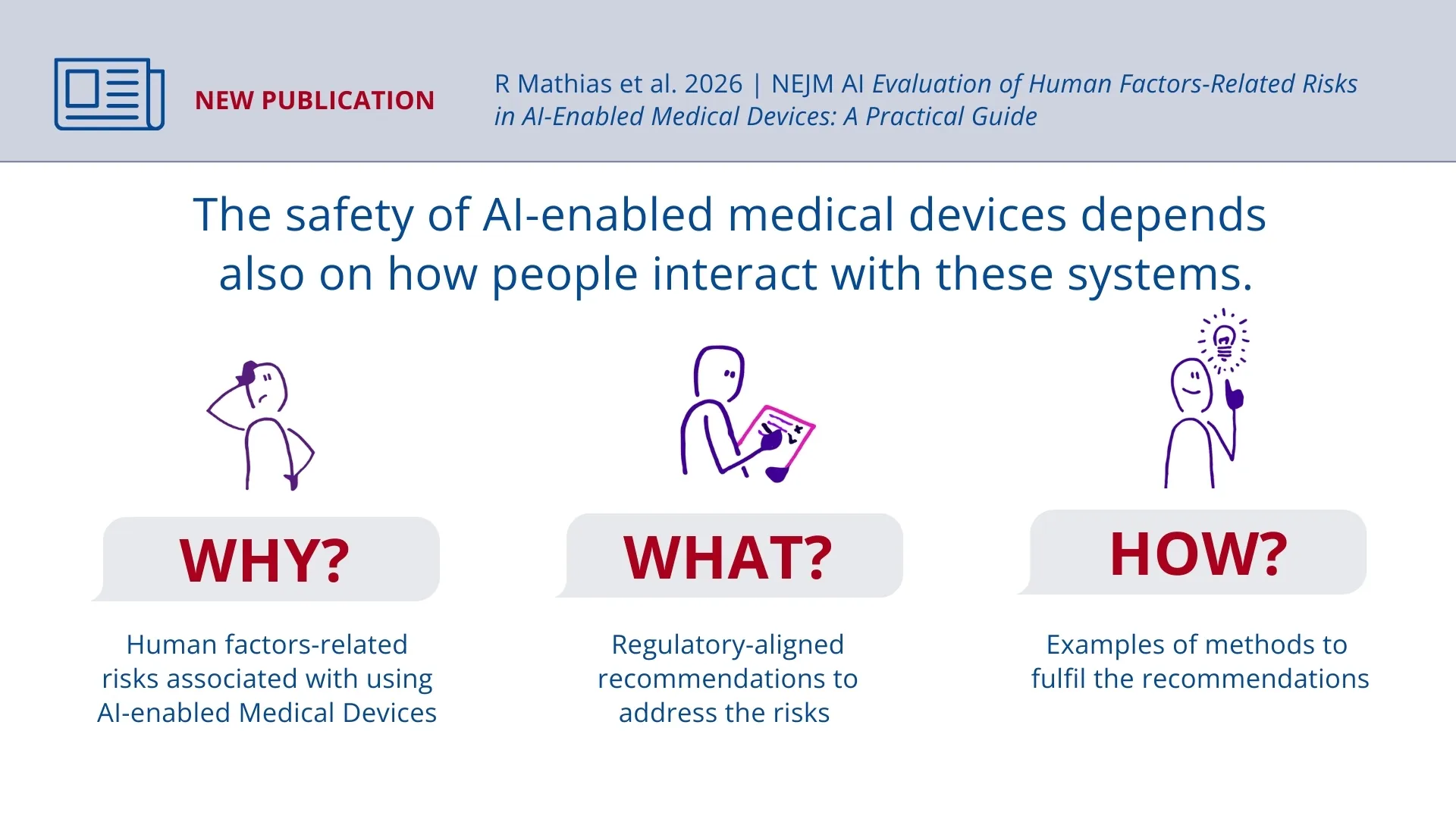

AI-enabled medical devices promise improved medical care and support for healthcare professionals. However, the safety and performance of such systems depend not only on algorithms and technical specifications but equally on how people use these devices and applications. In a recent publication in the scientific journal NEJM AI, a research team led by Prof. Stephen Gilbert from the Else Kröner Fresenius Center (EKFZ) for Digital Health at TUD Dresden University of Technology systematically analyzes risks that can arise in human-AI interactions and makes recommendations for manufacturers and regulatory evaluators.

The authors show that existing regulatory approval requirements have so far addressed many of these so-called “human factors-related risks” only partially. The resulting gaps can affect the safety and quality of care. To close them, the researchers identify seven key risks and develop practical recommendations for action that can be integrated into existing regulatory and documentation processes.

Risks in the use of AI systems

AI-based medical devices can be used in a variety of clinical settings. In radiology, for example, they assist in detecting cancer, while clinical decision support systems help select personalized therapies for patients. AI can also support real-time monitoring and early warning systems, power chatbots for applications such as patient communication, and drive software that automatically generates medical reports or summarizes findings.

The analysis focuses on risks that may arise in the practical use of such AI systems. These include an increased likelihood that outputs are misunderstood or misinterpreted because AI systems are sometimes opaque. Problems can also occur when trust in the application is miscalibrated, leading users either to rely too heavily on AI assistance or to ignore relevant recommendations. The researchers also point to the risk of automation bias: the tendency to adopt recommendations from automated systems uncritically, potentially overlooking errors or forgoing independent judgment. Additional risks include potential deskilling, technostress among users, an unchecked expansion of use beyond the originally intended scope (indication creep), and errors related to system changes or different operating modes. Such factors can create additional burdens or unexpected failures in clinical practice, even when the technical performance of a system is strong.

A practical guide for manufacturers and evaluators

For their analysis, the research team evaluated existing usability and safety standards and regulatory guidelines alongside the scientific literature on AI in healthcare. They also incorporated expert discussions from the fields of clinical application, regulation, and human factors. The result is a practical guide with seven recommendations that fills a gap in current standards. The recommendations are intended to support manufacturers and evaluators both before and after a product is placed on the market, with the aim of identifying AI-specific risks in interaction with human users at an early stage and addressing them systematically.

The framework recommends developing and deploying AI-based medical devices in a way that clearly defines who the users are, in which context the systems are applied, and which tasks are assigned to humans and which to the system. Furthermore, results should be presented in an easily understandable way, integrated into existing clinical workflows, and supplemented by training where needed, with safe fallback options available in the event of system failures. The authors emphasize the importance of continuous monitoring after market entry: usage patterns, potential misuse, or overreliance on AI systems should be systematically observed and corrected as needed. Changes to the systems must also be communicated transparently so that work processes can be adjusted accordingly.

The recommendations are deliberately formulated in general but regulatory-aligned terms so that they can be applied to different AI-enabled medical devices and application scenarios. As a next step, the researchers aim to test and further develop their recommendations in concrete pilot applications of AI-enabled medical devices. In the long term, human factors should be systematically considered in the regulation and evaluation of AI-based health technologies, reducing avoidable risks while supporting safe innovation in medicine.

The article was authored by researchers from TU Dresden (EKFZ for Digital Health, Chair of Industrial Design Engineering, and Faculty of Business and Economics), in collaboration with experts from the University of Oxford (United Kingdom) and Geneva University Hospital (Switzerland).
